Do you remember when GPT-3 first opened for testing and its capabilities left the world excited about what would come next?

Anyone who has followed trends in artificial intelligence knows that the models the OpenAI team releases tend to be important and to carry significant implications.

To understand why GPT-4’s release is quite exciting to many, we should begin with the concept of artificial intelligence.


Natural Language Processing

We have machine learning models and deep learning models nowadays.

These models are built from algorithms developed by researchers, implemented in code and trained on large amounts of data generated by us, the public, so that they specialize in producing output we consider logical and familiar.

The results show up as music, art and text that make sense to us.

You can check out Boomy (music), DALL-E 2 (images) and GPT-3 (text) to see machines produce each of these deeply cultural forms of expression.

We ask the machine to do something we already understand, and it has to meet a certain expectation. If it performs the task at an acceptable level without the user having to write code or provide other special input, we consider it artificial intelligence.

For this reason, GPT-3 can be considered an AI in its own right.

GPT-4 is simply expected to be a better AI than its predecessor, which is already considered highly accurate and capable.

Exciting, no?

What Is GPT?

GPT stands for Generative Pre-trained Transformer. It is a generative language model: in other words, an AI model that writes text on its own in response to a prompt entered by the user.
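To make "writes on its own in response to a prompt" more concrete: a generative model repeatedly predicts the next piece of text from what has been written so far and appends it. The sketch below shows only that loop, using a hypothetical toy bigram word model trained on a three-sentence corpus. A real GPT predicts tokens with a large Transformer network and usually samples among likely continuations rather than always taking the single most likely one.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: str) -> dict[str, Counter]:
    """Toy 'training': count which word follows which in the corpus."""
    words = corpus.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def generate(model: dict[str, Counter], prompt: str, max_new_words: int = 5) -> str:
    """Greedy autoregressive generation: append the most likely next word, repeatedly."""
    words = prompt.split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = model.get(words[-1])
        if not candidates:
            break  # no known continuation for this word, so stop early
        next_word, _count = candidates.most_common(1)[0]
        words.append(next_word)
    return " ".join(words)


corpus = "the model writes text . the model reads text . the model writes code ."
model = train_bigram(corpus)
print(generate(model, "the", 3))  # prints: the model writes text
```

Each new word depends only on the text produced so far, which is the same feedback loop a GPT model runs at a vastly larger scale.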

Currently, GPT-3 is one of the most robust and accurate text generators on the market.

Before GPT-3’s launch, the largest NLP model was Turing NLG from Microsoft, with 17 billion parameters.

Compared with GPT-3’s 175 billion parameters, that is roughly a tenfold jump in scale, which came with a large leap in capability.

Once released, GPT-3 was an immediate hit with tech entrepreneurs: AI copywriters, fine-tuned language models based on GPT-3 and all kinds of AI writing services appeared and soared in popularity.

Of course, it’s only natural that when an almost magical technological achievement appears, everyone rushes to use it to its fullest potential.

GPT-3 can be used to write stories, essays, reports, dialogue, code, articles, research, answers, questions, paraphrases and much more.

And with the advent of offshoots, language models can now generate text with a chosen narrative tone of voice and perspective, in-context storytelling and even conversations.

There is even a template for generating ‘break-up texts’.

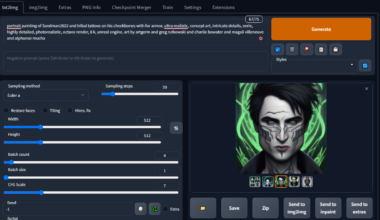

Data scientists, researchers and IT experts are digging deep into GPT-3’s behavior, testing which phrasings, words and word positions in a prompt produce correct or convincingly human results.

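On another note, the OpenAI team chose the straightforward name Generative Pre-trained Transformer because it describes the model: it generates text, it is pre-trained on a large body of text before being adapted to specific tasks, and it is built on the Transformer neural network architecture.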

OpenAI is an AI research laboratory founded on December 11th, 2015 by a group of tech figures who collectively pledged 1 billion USD.

Then, in 2019, Microsoft invested another 1 billion USD in OpenAI.

What did they do with all that money? Why, of course, they made better AI language models with it.

What is GPT-4?

To answer this question, we must understand what GPT-3 is.

Then GPT-2.

Then GPT-1.

Like a movie, it’s important to have watched the preceding films in the series to understand the excitement for the next installment.

Before GPT, natural language processing models were typically trained on annotated data for one specific task. They were highly specialized and unable to handle general topics.

GPT-1 was published in 2018 with 117 million parameters. It was pre-trained on unlabeled text and then fine-tuned for a variety of downstream tasks, such as sentiment analysis.

GPT-2 was released in 2019 with 1.5 billion parameters. It was trained on a much larger dataset and demonstrated zero-shot task transfer: performing tasks it was never explicitly trained for, given only an instruction or prompt.

GPT-3 was released in 2020 with a whopping 175 billion parameters and this time around, it could handle code generation, language translation, text transformation, contextualization and much more.

GPT-4 is next in the series and is expected to be released within the year.

Practical Applications of GPT

GPT is popular because of its unprecedented ability to manipulate and generate text.

Individuals all over the world use GPT-based text generators for various purposes. They can use them:

- For general purpose text generation when they need to expand upon a topic.

- To shrink down their writing when it gets too verbose.

- To improve their writing when they feel it lacks a wide variety of vocabulary.

- To adjust the tone of the narrative in their writing to fit the context.

- As a copywriting tool to generate multiple articles on the fly.

- Like a search engine, to ask questions about a subject they are interested in.

- To draw inspiration and get past writer’s block.

- To write effective posts that drive engagement.

There are many benefits to utilizing this new technology. Like any other tool, it makes any task associated with text significantly easier if used correctly.

Because programming code is simply another kind of text, GPT-3 can often write working code from just a few snippets of example code, although its output still needs to be reviewed and tested.
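Since the model only ever sees text, giving it "a few snippets of example code" simply means placing worked examples ahead of the new request and letting the model continue the pattern (few-shot prompting). The sketch below only assembles such a prompt. The `# Task:` header format and the function name `build_few_shot_prompt` are hypothetical choices, not a format specified by OpenAI, and sending the prompt to a model is left out.

```python
def build_few_shot_prompt(examples: list[tuple[str, str]], new_task: str) -> str:
    """Assemble a few-shot prompt: solved examples first, then the unsolved task.

    The '# Task:' header is an arbitrary illustrative convention; any
    consistent pattern the model can continue would serve the same purpose.
    """
    blocks = [f"# Task: {description}\n{code}" for description, code in examples]
    # The final block states a task but leaves the solution empty,
    # inviting the model to write it in the same style as the examples.
    blocks.append(f"# Task: {new_task}\n")
    return "\n\n".join(blocks)


examples = [
    ("add two numbers", "def add(a, b):\n    return a + b"),
    ("reverse a string", "def reverse(s):\n    return s[::-1]"),
]
print(build_few_shot_prompt(examples, "square a number"))
```

Passing an empty `examples` list turns this into a zero-shot prompt, where the model receives only the instruction.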

Additionally, GPT-3 has been applied effectively to create website mockups. One developer combined the UI prototyping software Figma with GPT-3 so that webpages can be created from only a short text description supplied by the user.

Even website clones have been created with GPT-3 by using a URL as the prompt text.

Developers also use GPT-3 to produce code snippets, regular expressions, plots and charts from text descriptions, Excel functions and other development aids, as well as conversations, quizzes, comic strips and all kinds of fiction.

For almost any text-based task, GPT-3 can produce above-average results.

So What Can We Expect From GPT-4?

Optimization

OpenAI’s CEO, Sam Altman, reportedly said during an online Q&A session that GPT-4 will not be much larger than GPT-3, and some observers have estimated it at around 175 to 280 billion parameters. It seems the company is prioritizing fine-tuning and efficiency over sheer model size. The new generation is expected to focus on optimization and cost-effectiveness whilst still outperforming its predecessors.
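Why does parameter count matter so much for cost? A rough back-of-the-envelope sketch: simply storing a model’s weights takes about (number of parameters) × (bytes per parameter). The 2-byte, 16-bit precision used below is an assumption for illustration, and the figure ignores activations, optimizer state and serving overhead, so real deployments need more memory than this.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes).

    Assumes 16-bit (2-byte) weights by default; activations and other
    runtime memory are deliberately not included.
    """
    return num_params * bytes_per_param / 1e9


# GPT-3's size and the upper end of the GPT-4 estimate quoted in this section.
for label, params in [("175B parameters", 175e9), ("280B parameters", 280e9)]:
    print(f"{label}: ~{weight_memory_gb(params):.0f} GB of weights")
```

Under these assumptions that is roughly 350 GB versus 560 GB just for the weights, which is why keeping model growth modest can make a real difference to running costs.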

Text-only

Although DALL-E, which was based on GPT-3, was an amazing development, GPT-4 is not expected to be multimodal: it will focus on manipulating and generating text from text inputs rather than working with images.

Sparsity

Sparsity refers to models that use conditional computation, activating only part of their parameters for any given input. Such models scale easily without incurring much extra processing cost. However, GPT-4 is expected to be a dense model, meaning it will have no sparsity and will use all of its parameters for every input, regardless of the task.
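The difference between sparse and dense can be illustrated with a toy example. Both functions below work with the same 12 weights. The dense version applies all of them to every input; the sparse version uses a small router to pick one 3-weight "expert" (top-1 routing, as used in mixture-of-experts models) and touches only those weights. All numbers are made up, and this is a conceptual sketch, not how any GPT model is actually implemented.

```python
def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def dense_layer(x: list[float], weight_rows: list[list[float]]) -> tuple[list[float], int]:
    """Dense: every weight row is applied to the input, so every parameter is used."""
    outputs = [dot(row, x) for row in weight_rows]
    params_used = sum(len(row) for row in weight_rows)
    return outputs, params_used


def sparse_layer(x: list[float], experts: list[list[float]],
                 router_rows: list[list[float]]) -> tuple[int, float, int]:
    """Sparse (top-1 routing): a router scores each expert and only the best one runs."""
    scores = [dot(row, x) for row in router_rows]
    chosen = max(range(len(experts)), key=lambda i: scores[i])
    output = dot(experts[chosen], x)
    # Only the chosen expert's weights are used (the router's own weights are not counted).
    return chosen, output, len(experts[chosen])


weights = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6], [0.7, 0.8, 0.9], [1.0, 1.1, 1.2]]
router = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [0, 0, 0]]
x = [1.0, 2.0, 3.0]

_, dense_used = dense_layer(x, weights)
chosen, _, sparse_used = sparse_layer(x, weights, router)
print(f"dense used {dense_used} weights; sparse used {sparse_used} (expert {chosen})")
```

A different input can be routed to a different expert, so a sparse model can hold many parameters while spending compute on only a fraction of them per input; a dense model pays for all of them every time.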

Alignment

For us humans, conversations and stories are always in context and make sense. AI text generators have always been hit-and-miss at staying coherent. GPT-4 is expected to apply the lessons OpenAI learned from InstructGPT (a GPT model fine-tuned with human feedback), so the text it generates should be more coherent and closer to what people intend.
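In the InstructGPT work, OpenAI had people rank alternative model outputs, trained a reward model on those rankings and then fine-tuned the language model with reinforcement learning to score well under that reward model. The reward model’s core training signal is a pairwise comparison loss, −log σ(r_preferred − r_rejected), sketched below for a single pair of scalar scores. The network that produces the scores and the reinforcement learning step are omitted, and the function name is a hypothetical label.

```python
import math


def pairwise_preference_loss(reward_preferred: float, reward_rejected: float) -> float:
    """Loss -log(sigmoid(r_preferred - r_rejected)) for one human comparison.

    Small when the reward model scores the human-preferred answer higher,
    large when it scores the rejected answer higher.
    """
    margin = reward_preferred - reward_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); branch on the sign to avoid overflow in exp.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


print(pairwise_preference_loss(2.0, 0.0))  # preferred answer scored higher: small loss
print(pairwise_preference_loss(0.0, 2.0))  # rejected answer scored higher: large loss
```

Minimizing this loss over many human comparisons pushes the reward model to agree with people’s preferences, which is what lets it steer the language model toward more helpful, coherent answers.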

The Impact of GPT-4 On Companies And Consumers

GPT-3 already serves as the base model for several successful companies and has captured public attention with its ability to handle almost any writing task, so the news of a possible GPT-4 release has been compelling, to say the least.

GPT-4 for Content Writers

- A larger quantity of AI-generated articles and blog posts.

- AI-assisted writing tools will improve drastically, leading to more essays, stories, books and research produced with their help.

- AI-assisted text manipulation will improve drastically, making it simpler to summarize, expand or change the narrative voice of a text.

- Creative stories and outlining assistance will improve.

Naturally, content writers will have direct access to an even better GPT model that can produce large amounts of precise text in just a few moments. Regulating this will be crucial, as the internet will be more cluttered with AI-generated content than ever before.

Essays and research papers on widely known topics will become even easier to generate than they are now.

But despite that, the upcoming GPT-4 will be a tremendously beneficial piece of technology for writers as it can provide endless prompts and paraphrases, not to mention the text manipulations.

GPT-4 for Developers

- Coding basic functions using AI will be more reliable.

- Some software will be written entirely by GPT-4.

- Coding solutions and optimizations will be possible.

- Automation will be simpler.

- Basic programming can be done entirely automatically.

GPT-4, like its predecessor and Codex, is expected to interpret natural-language prompts and generate code in the programming language you choose. With precise instructions, coders will be able to automate simpler coding tasks using their own customizations. It could even serve as a code optimization tool if applied correctly.

GPT-4 for Creatives/Marketing

- Songs and poems written by AI will be of higher quality.

- Such services will become much more popular due to GPT-4’s improved quality.

- Websites and copywriting services will increase in numbers.

- Marketing hooks and post/video descriptions can then be automated.

- Due to the alignment improvements, GPT-4 will be much more marketable and safer for businesses.

With its improved coherence, GPT-4 will no doubt be an interesting model for everyone. For those looking for inspiration, story variations and new ideas, the new generation of GPT will be crucial. Marketing and blog posts will be structured and automated with the help of GPT-4.

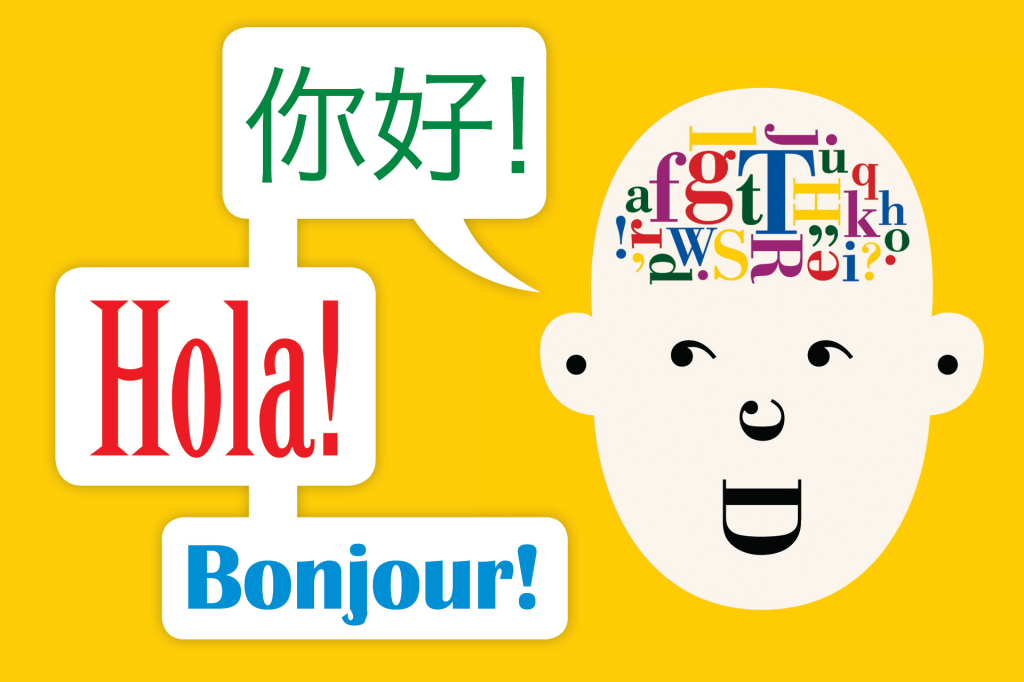

GPT-4 for Translators

- Language handling will improve drastically in quality.

- With increased coherence and alignment, language translation will become more reliable.

- Complex and context-dependent translation prompts will be handled better.

With the help of GPT-4, translation services will become vastly easier to build thanks to the new model’s handling of multiple languages. Although the model’s grasp of complex phrasing and context is strongest in English, GPT-4 will no doubt outperform its predecessor in this category as well.

GPT-4 Generally Speaking

GPT-4’s release won’t revolutionize or redefine the term AI. However, it should fix many of its predecessors’ weaknesses by building on the experience OpenAI has gained, resulting in noticeably better accuracy, handling of complexity and coherence.

The average person could use GPT-4 for daily tasks such as job reports, shooting an email to a coworker, paraphrasing their writing et cetera.

One thing is for sure: the model will be picked up and built into various platforms for specific purposes, and its improvements will lead to an even greater surge of dynamic, general-purpose AI writing than we see now.

GPT-3.5 (ChatGPT)

Recently, OpenAI released ChatGPT, which is built on its GPT-3.5 series of models, while the general public waits for the highly anticipated GPT-4. This neither delays nor takes anything away from the excitement around GPT-4. In fact, it only adds to the anticipation.

ChatGPT is a fine-tuned chatbot version of GPT: a general-purpose chatbot capable of simulating natural human conversation.

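According to the OpenAI team, ChatGPT is a sibling model to InstructGPT, fine-tuned from a GPT-3.5 model using human feedback, and it is more aligned and polished (text-davinci-003, released around the same time, is a separate GPT-3.5 model). OpenAI reports that this approach reduces harmful and untruthful outputs, although it acknowledges the model can still write plausible-sounding but incorrect answers and sometimes show bias.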

Data scientists who have tried it out report drastic improvements in prompt understanding, text structure, poetry and phrasing. It is also better at generating blog posts and business articles thanks to its safe-for-work, well-structured output.

Conclusion

It seems the rumors of GPT-4 having trillions of parameters were truly just rumors. The next model in the series seems more grounded in reality: it should significantly improve on its predecessors’ capabilities whilst optimizing storage, processing and cost.

And with the advent of GPT-4, another spike in AI popularity will follow, bringing more investment as onlookers rush to join in. Many new businesses will be formed and new tools will become available.

Whether for dynamically automated customer care through chatbots or fully generated university-level essays, GPT-4 will undoubtedly be used widely, and we eagerly await it.