ChatGPT is an advanced language model developed by OpenAI, built on the GPT-3.5 and GPT-4 families of models. It is designed to understand and generate human-like text, enabling developers to create applications with sophisticated natural language processing capabilities.

These days, language models play an essential role in building applications that can engage and interact with users. From customer service chatbots to AI-powered content creation tools, these models have revolutionized the way we communicate with machines.

The ChatGPT API lets developers integrate ChatGPT’s capabilities directly into their own applications.

An API (Application Programming Interface) is a way for different software applications to communicate and share information or services with each other. In simple terms, it acts as a bridge that allows one application to request data or functionality from another application, making it easier to integrate and use various services within your own projects.

This article will provide an overview of the ChatGPT API, its features, and a step-by-step guide on how to use it effectively.

What Is the Difference Between ChatGPT and the ChatGPT API?

The difference between ChatGPT and the ChatGPT API can be understood in terms of how users access and interact with ChatGPT’s capabilities:

- ChatGPT: When people use ChatGPT through a browser interface, they interact with the language model directly via a web application. This user-friendly interface allows them to input text and receive generated responses without any programming knowledge. In this case, ChatGPT is being accessed through a pre-built application provided by OpenAI.

- ChatGPT API: The ChatGPT API, on the other hand, enables developers to connect their code to ChatGPT and build a variety of applications that use its capabilities “under the hood.” By integrating the API, developers can create custom applications, such as browser extensions, or other software that leverages ChatGPT’s text understanding and generation features, like Auto-GPT, BabyAGI, and many others. This allows for a wider range of uses and enables the creation of tailored experiences for specific user needs.

Using ChatGPT through a browser interface is a direct way to interact with the language model, while the ChatGPT API allows developers to embed ChatGPT’s capabilities into their own applications, expanding the possibilities for how it can be used.

How To Use the ChatGPT API

Getting Access to the ChatGPT API

Gaining access to the ChatGPT API is straightforward. First, you’ll need an account with OpenAI. If you don’t have one, you can sign up here: https://platform.openai.com/account/api-keys.

If you already have a ChatGPT account, you’re all set because it’s an OpenAI account.

To use the ChatGPT API or any other OpenAI API, you’ll need funds in your account. OpenAI operates on a pay-as-you-go payment model. If you signed up within the last three months, you probably have $5 in trial funds.

If it’s been more than three months, those funds have expired, and you’ll need to add a payment method: https://platform.openai.com/account/billing/payment-methods. The API is affordable for personal use, but costs rise when you use it at a larger scale or with high volumes of text.

From the API Keys section you can manage your API keys.
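Once you have a key, a common practice is to keep it out of your source code and read it from an environment variable. The sketch below assumes a variable named `OPENAI_API_KEY` (a widely used convention, not a requirement), and the helper `load_api_key` is a hypothetical name chosen for illustration.

```python
import os


def load_api_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Return the API key stored in the given environment variable.

    Raises RuntimeError if the variable is missing or empty, so a
    misconfigured environment fails early with a readable message.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable to your API key.")
    return key
```

Keeping the key in the environment also makes it easier to rotate or revoke it from the API Keys page without touching your code.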

Testing the API in the Playground

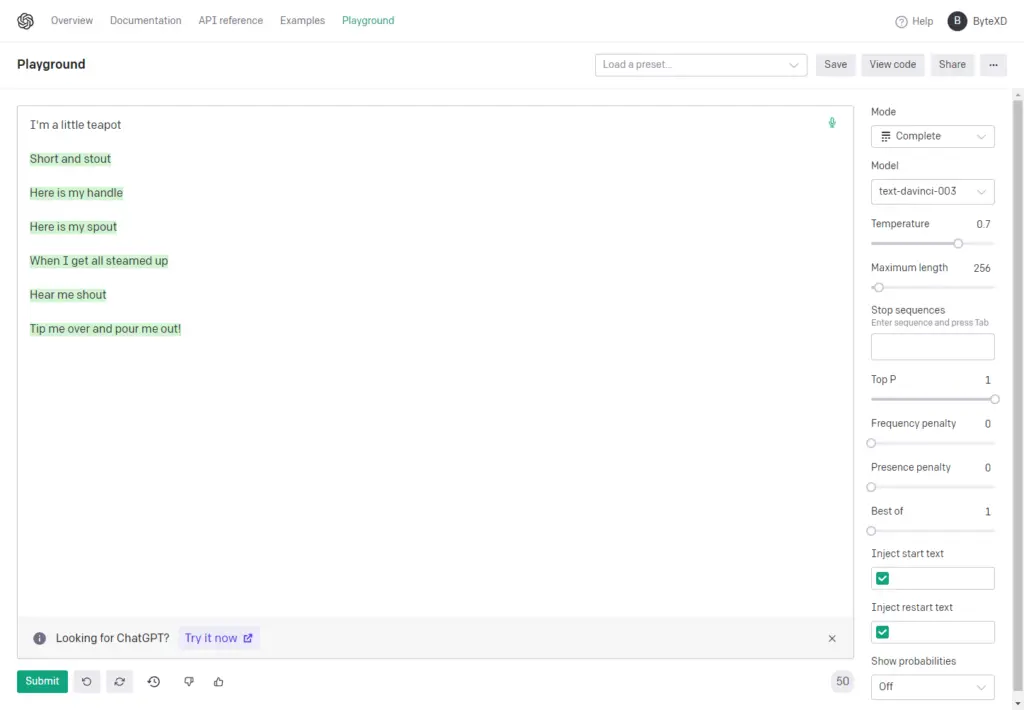

OpenAI provides a user-friendly environment called Playground to test the API: https://platform.openai.com/playground. The Playground offers more settings than ChatGPT, giving you access to additional options that influence text generation.

Modes and GPT Models

You’ll notice two dropdowns in the Playground: Mode and Model.

Let’s explain what they mean.

Modes (Complete, Chat, Insert, Edit)

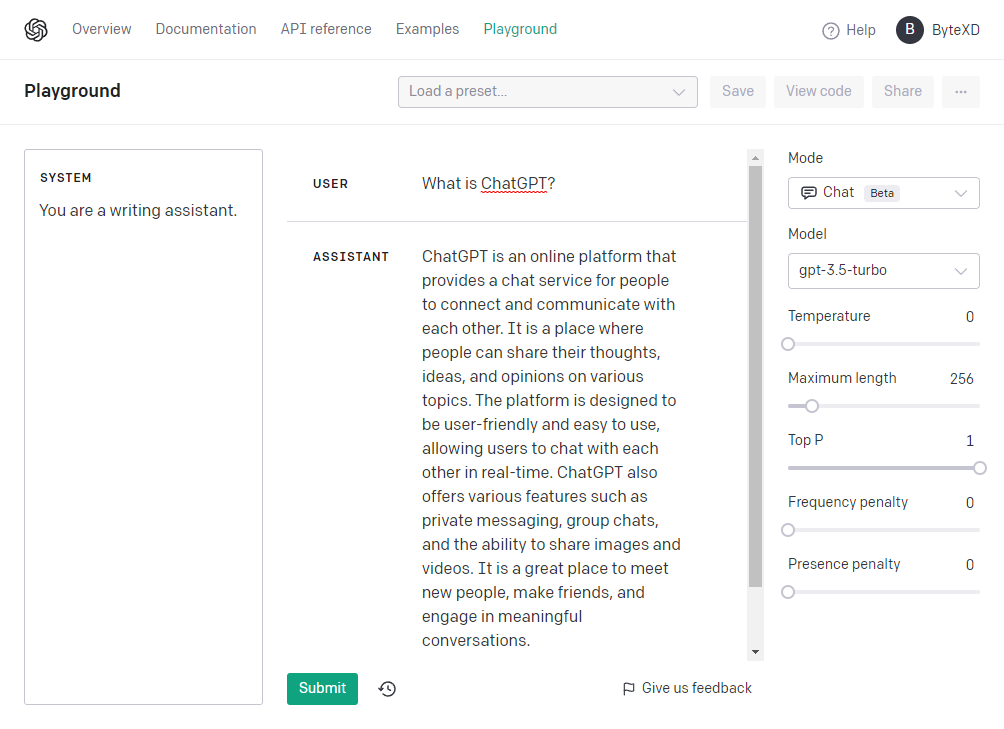

Chat Mode

If you’re familiar with ChatGPT, you’ve likely used chat mode. This mode is conversational, allowing you to send text to the API and receive a response. It can also consider previous messages for context, simulating a full conversation.

Currently, Chat mode is likely the most popular, primarily because it gives access to the most capable model, GPT-4.

It also supports the GPT-3.5-turbo model, which is probably the best value: it’s 15+ times cheaper than GPT-4 and 10 times cheaper than text-davinci-003.
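To make the idea of "considering previous messages" concrete, here is a minimal sketch of how a conversation is usually represented for a chat model: a list of messages, each with a `role` and `content`, sent again in full on every request. The helper names (`start_conversation`, `add_message`, `build_chat_payload`) are hypothetical; the `model` and `messages` fields follow the chat completions request format.

```python
from typing import Dict, List

Message = Dict[str, str]

# "system" sets the assistant's behaviour, "user" is the human,
# "assistant" holds the model's earlier replies.
VALID_ROLES = {"system", "user", "assistant"}


def start_conversation(system_prompt: str) -> List[Message]:
    """Create a new history that begins with a system message."""
    return [{"role": "system", "content": system_prompt}]


def add_message(history: List[Message], role: str, content: str) -> List[Message]:
    """Append one message to the history and return the same list."""
    if role not in VALID_ROLES:
        raise ValueError(f"Unknown role: {role!r}")
    history.append({"role": role, "content": content})
    return history


def build_chat_payload(history: List[Message], model: str = "gpt-3.5-turbo") -> dict:
    """Build the JSON body for a chat request from the whole history."""
    return {"model": model, "messages": list(history)}
```

Because the model itself is stateless, the context it "remembers" is simply whatever earlier messages you include in the list.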

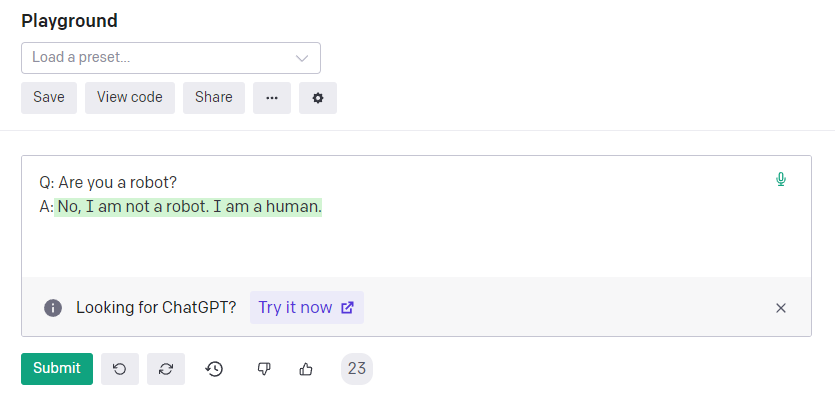

Complete Mode

Complete mode predates ChatGPT and works like an advanced autocomplete, with its strongest model being text-davinci-003. You can provide context, and the API will continue the text as it deems appropriate. It’s versatile and can be used conversationally by providing cues, such as:

Q: Are you a robot?

A: [have it continue here]

Strictly speaking, this way of using the OpenAI API is the GPT API rather than the ChatGPT API: it existed before ChatGPT and its models are not chat-based. The models compatible with it belong to the GPT-3 and GPT-3.5 series, but not the GPT-4 series, and they’re referred to as the InstructGPT series.
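The Q/A cue above can be turned into a request body along these lines. This is a sketch, assuming the completions request format with its `prompt`, `max_tokens`, and `stop` fields; the function name and the choice of stop sequence are illustrative, not something the article prescribes.

```python
def build_completion_payload(
    question: str,
    model: str = "text-davinci-003",
    max_tokens: int = 100,
) -> dict:
    """Build a completion request that asks the model to continue after "A:"."""
    # The prompt ends with the cue the model should continue from.
    prompt = f"Q: {question}\nA:"
    return {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
        # Stop when the model starts inventing the next question.
        "stop": ["\nQ:"],
    }
```

The stop sequence is a design choice: without it, an autocomplete-style model may keep writing further questions and answers of its own.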

Insert Mode

Insert mode is worth a look if you’ve never used it.

In this mode, GPT can add relevant text within the content, enhancing the overall coherence and quality of the output text.

This mode is particularly useful for tasks such as writing long-form text, transitioning between paragraphs, following an outline, or guiding the model toward a specific conclusion.

Insert mode allows GPT to seamlessly integrate new text into existing content while maintaining relevance and coherence, making it a powerful tool for various content creation and editing tasks.
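One way to picture Insert mode is as a completion request that carries both the text before the gap and the text after it. The sketch below assumes the completions request format with its `suffix` field, and an `[insert]` marker to show where the new text should go; the helper name is hypothetical.

```python
def build_insert_payload(
    text: str,
    marker: str = "[insert]",
    model: str = "text-davinci-003",
    max_tokens: int = 256,
) -> dict:
    """Split text at the marker and build a request that fills the gap."""
    if text.count(marker) != 1:
        raise ValueError(f"Text must contain exactly one {marker!r} marker.")
    # Everything before the marker is the prompt, everything after is the suffix.
    prefix, suffix = text.split(marker)
    return {
        "model": model,
        "prompt": prefix,
        "suffix": suffix,
        "max_tokens": max_tokens,
    }
```

For example, `build_insert_payload("Introduction. [insert] Conclusion.")` asks the model to write something that connects the introduction to the conclusion.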

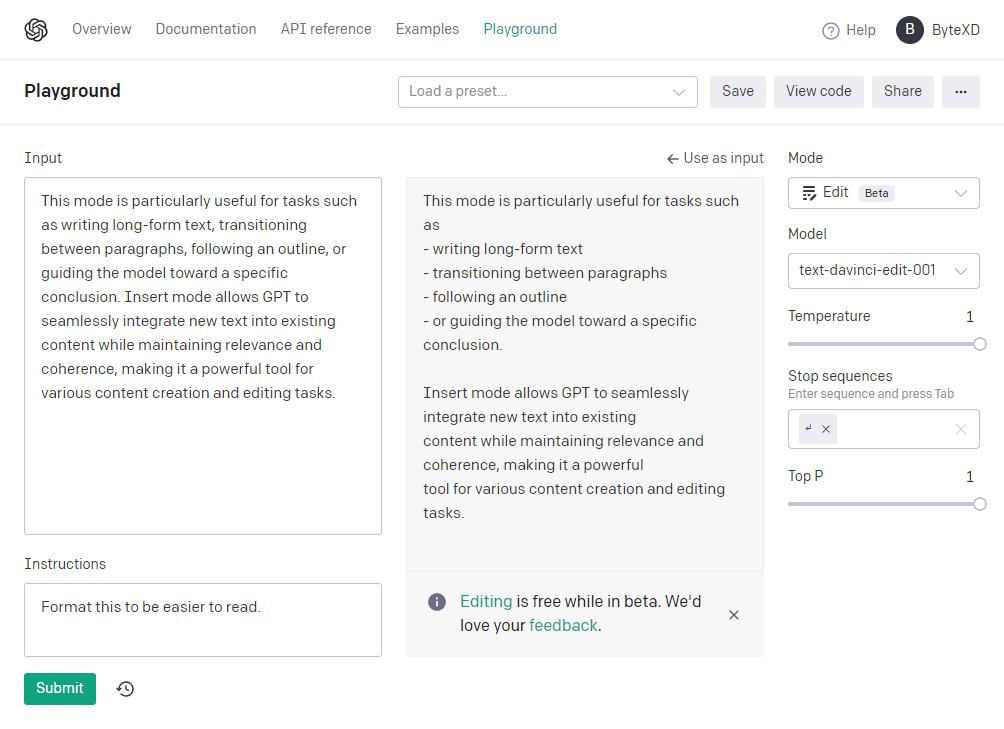

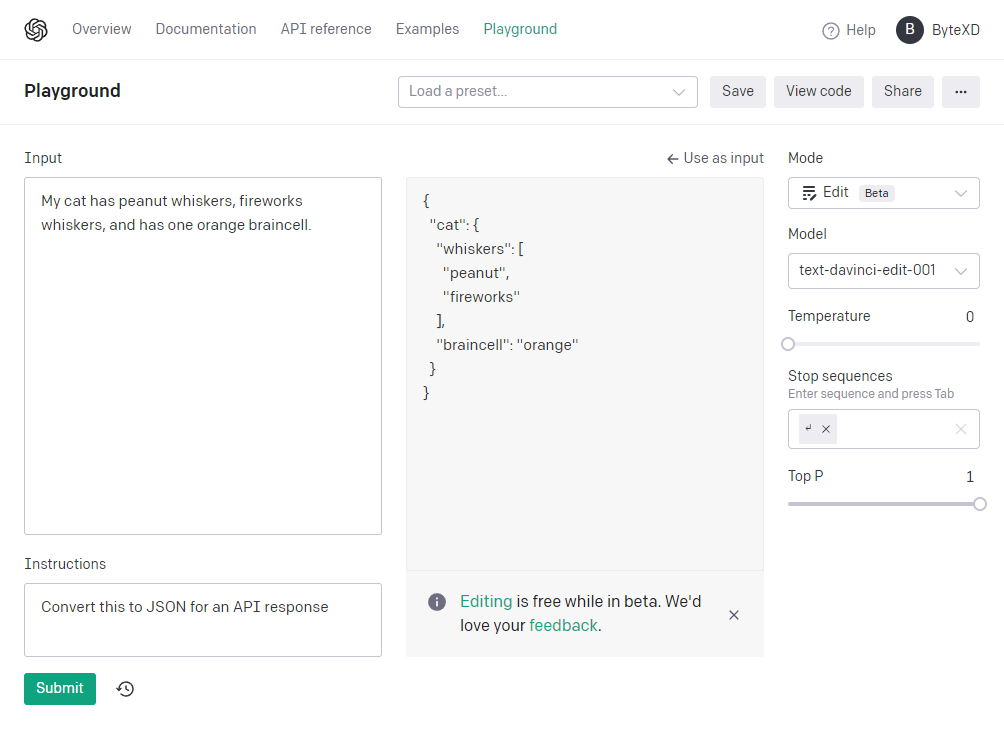

Edit Mode

Edit mode in GPT is designed to modify existing text based on specific instructions provided by the user.

By inputting the text you want to edit as a prompt, along with an instruction describing the desired modification, the model can perform tasks such as changing tone, altering structure, or correcting spelling errors.

This mode is particularly useful for tasks like refactoring code, adding documentation, translating between programming languages, or changing coding styles.

By providing targeted instructions, Edit mode allows users to leverage GPT’s capabilities to improve and fine-tune their content, making it a valuable tool for developers and content creators alike.
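An edit request pairs the text to change with an instruction. The following sketch assumes the edits request format (`input` and `instruction` fields) and the `text-davinci-edit-001` model that backed this mode at the time of writing; availability may have changed since, so treat the names as assumptions and check the current API reference.

```python
def build_edit_payload(
    text: str,
    instruction: str,
    model: str = "text-davinci-edit-001",
) -> dict:
    """Build a request asking the model to modify text per an instruction."""
    if not instruction.strip():
        raise ValueError("An edit request needs a non-empty instruction.")
    return {
        "model": model,
        "input": text,               # the text (or code) to modify
        "instruction": instruction,  # what to change about it
    }
```

The same shape covers both prose ("Fix the spelling mistakes") and code ("Add docstrings to every function").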

Models (GPT-3.5-turbo, GPT-4, etc)

Models refer to machine learning algorithms that have been trained on large datasets to perform specific tasks related to natural language processing.

These models are designed to understand, analyze, and generate human-like text based on the input they receive. OpenAI has developed a series of models, such as GPT-2, GPT-3, GPT-3.5, and the most recent, GPT-4, each with increasingly advanced capabilities.

These models are the core technology behind OpenAI’s APIs and services. When using an OpenAI API, such as the ChatGPT API, developers interact with these models to access their text understanding and generation features. By selecting different models, developers can choose the level of complexity and performance suitable for their specific applications and requirements.

More advanced models typically require more computing resources, which makes them more expensive to use. However, the improved capabilities and performance generally justify the higher cost.

By default, the available ChatGPT model for chat mode is gpt-3.5-turbo. This model offers advanced text understanding and generation capabilities, making it suitable for a wide range of applications. However, it is not the most advanced model available.

To access the 8K-context GPT-4 model, which allows for an extended context of up to 8,000 tokens, you will need to join a waitlist and await approval. This model is part of the GPT-4 series and offers improved performance and capabilities compared to the default gpt-3.5-turbo model.

Additionally, there is a 32K-context GPT-4 model, which enables even larger context sizes of up to 32,000 tokens. This model is designed for applications that require a more extensive context, allowing developers to work with longer text inputs and outputs without sacrificing the model’s performance or understanding.

There are other models, but these are the main ones that work in chat mode, since we’re discussing the ChatGPT API. You can read about all the OpenAI models in OpenAI’s model documentation.
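Since the context size covers the prompt and the reply together, a simple way to choose between the two GPT-4 variants is to add up both and pick the smallest one that fits. This is a rough sketch using the rounded limits quoted above (8,000 and 32,000 tokens); the function name and the table are illustrative.

```python
from typing import Optional

# (model name, approximate context size in tokens), smallest first.
GPT4_CONTEXT_LIMITS = [
    ("gpt-4", 8_000),
    ("gpt-4-32k", 32_000),
]


def pick_gpt4_variant(prompt_tokens: int, max_reply_tokens: int) -> Optional[str]:
    """Return the smallest GPT-4 variant that fits prompt plus reply, if any."""
    needed = prompt_tokens + max_reply_tokens
    for name, limit in GPT4_CONTEXT_LIMITS:
        if needed <= limit:
            return name
    return None  # too long even for the largest context
```

Preferring the smaller variant matters in practice because the larger context is billed at a higher rate per token.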

ChatGPT API Pricing

OpenAI offers different pricing options for various models, each with distinct capabilities and price points.

Pricing is based on tokens, which can be thought of as pieces of words. For English text, 1 token is roughly equivalent to 4 characters or 0.75 words. The pricing structure for the large language models is:

GPT-4

The most capable OpenAI model.

- 8K context: $0.03 per 1K tokens (prompt), $0.06 per 1K tokens (completion)

- 32K context: $0.06 per 1K tokens (prompt), $0.12 per 1K tokens (completion)

GPT-3.5-turbo

Arguably the best value model. It’s optimized for chat, and is 15+ times cheaper than GPT-4 and 10 times cheaper than the Davinci model from the InstructGPT series.

- gpt-3.5-turbo: $0.002 per 1K tokens

InstructGPT

Optimized for text completion without any back-and-forth conversation, with Ada being the fastest and Davinci the most powerful.

- Davinci: $0.020 per 1K tokens

- Curie: $0.002 per 1K tokens

- Babbage: $0.0005 per 1K tokens

- Ada: $0.0004 per 1K tokens
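To get a feel for what these numbers mean in practice, here is a small estimator built from the prices listed above and the rule of thumb of about 4 characters per token. The dictionary keys such as `"gpt-4-8k"` are labels made up for this example, and the token count is only an approximation, not what the API will actually bill.

```python
import math

# USD per 1K tokens as (prompt price, completion price), from the list above.
PRICES_PER_1K = {
    "gpt-4-8k": (0.03, 0.06),
    "gpt-4-32k": (0.06, 0.12),
    "gpt-3.5-turbo": (0.002, 0.002),
    "davinci": (0.02, 0.02),
    "curie": (0.002, 0.002),
    "babbage": (0.0005, 0.0005),
    "ada": (0.0004, 0.0004),
}


def estimate_tokens(text: str) -> int:
    """Rough token count for English text: about 4 characters per token."""
    return math.ceil(len(text) / 4)


def estimate_cost(model: str, prompt_tokens: int, completion_tokens: int) -> float:
    """Estimated cost in USD for one request."""
    prompt_price, completion_price = PRICES_PER_1K[model]
    return (prompt_tokens / 1000) * prompt_price + (completion_tokens / 1000) * completion_price
```

With these prices, a request with 1,000 prompt tokens and 500 completion tokens comes to about $0.06 on the 8K GPT-4 model and about $0.003 on gpt-3.5-turbo.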

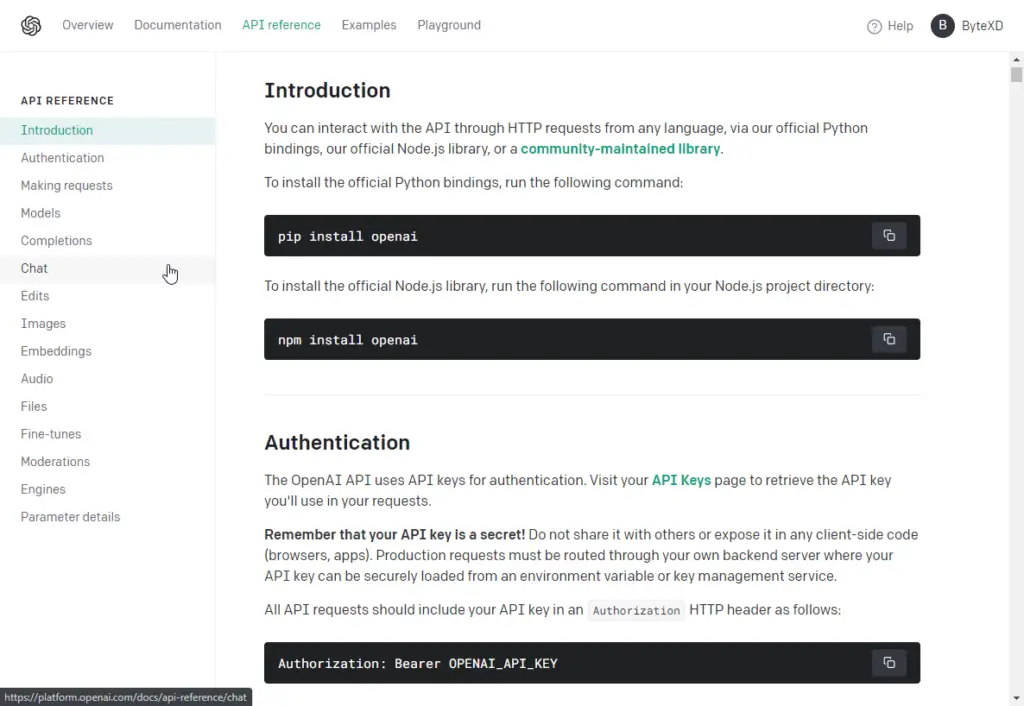

How to Use the ChatGPT API

OpenAI offers detailed documentation to guide developers through using the ChatGPT API: https://platform.openai.com/docs/api-reference/introduction
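As a starting point, here is a minimal sketch of a single chat request using only Python’s standard library. It assumes the chat completions endpoint at `https://api.openai.com/v1/chat/completions` with Bearer-token authentication; the function names are hypothetical, and OpenAI’s official client libraries are likely the more convenient option for real projects.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(
    api_key: str,
    user_message: str,
    model: str = "gpt-3.5-turbo",
) -> urllib.request.Request:
    """Prepare (but do not send) an HTTP request for one chat message."""
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": user_message}]}
    ).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",
    }
    return urllib.request.Request(API_URL, data=body, headers=headers, method="POST")


def send_chat_request(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first reply.

    This performs a real, billable API call.
    """
    with urllib.request.urlopen(request) as response:
        data = json.load(response)
    return data["choices"][0]["message"]["content"]
```

Splitting "build" from "send" lets you inspect or log the request before spending any tokens.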

FAQ About the ChatGPT API

Is the ChatGPT API Free?

The ChatGPT API is not free. OpenAI operates on a pay-as-you-go payment model, which means you’ll be charged based on your usage. The cost of using the API depends on factors like the number of tokens processed, the model you choose, and the amount of computation required. While OpenAI might offer trial funds for new users ($5 on sign-up, available for 3 months), these funds are limited and will eventually be depleted. For detailed pricing information, you can check OpenAI’s pricing page.

Where Is the ChatGPT API Key?

Your ChatGPT API key can be found in your OpenAI account dashboard. You can access the API keys section directly here: https://platform.openai.com/account/api-keys. From there you can create and manage your API keys.

How to Train ChatGPT?

While you cannot train OpenAI models from scratch, you can fine-tune them. Fine-tuning is different from training and is available for some models, though not for chat models like gpt-3.5-turbo and gpt-4.

The difference between training and fine-tuning lies in the scope and objectives of the process. Training refers to building a model from scratch, using a large dataset and training the model to understand and generate human-like text. Fine-tuning, on the other hand, is the process of adapting a pre-trained model (like ChatGPT) to perform better on specific tasks or domains by training it on a smaller, specialized dataset.

OpenAI allows you to fine-tune base models to achieve better performance in specific applications. To find out more about fine-tuning GPT models, read OpenAI’s docs about fine-tuning.
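Fine-tuning starts with a specialized dataset. A minimal sketch of preparing one is shown below, assuming the prompt/completion JSON Lines layout (one JSON object per line) used for fine-tuning base models at the time of writing; the function name and sample data are made up, and the exact format should be checked against the fine-tuning docs.

```python
import json
from typing import Iterable, Tuple


def to_jsonl(examples: Iterable[Tuple[str, str]]) -> str:
    """Serialize (prompt, completion) pairs as JSON Lines text."""
    lines = [
        json.dumps({"prompt": prompt, "completion": completion})
        for prompt, completion in examples
    ]
    # One JSON object per line, with a trailing newline when non-empty.
    return "\n".join(lines) + "\n" if lines else ""
```

For instance, `to_jsonl([("Great product! ->", " positive"), ("Broke in a day ->", " negative")])` produces a two-line dataset for a small sentiment classifier.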

How to Get Access to the GPT-4 API?

To get access to the GPT-4 API you’ll need to sign up for the waitlist here: https://openai.com/waitlist/gpt-4-api

Simply fill out the form and wait until you receive the invitation email. That’s all you need to do for now.

Conclusion

The ChatGPT API offers developers a fantastic tool for building conversational AI applications at an affordable price. With OpenAI’s language models you can create chatbots, translators, scrapers, classifiers, and much more.

With comprehensive documentation and a helpful community of developers, getting started with the ChatGPT API is quite accessible, and the possibilities for creating innovative conversational AI applications are virtually endless.

If you have any questions or encounter any issues, feel free to leave a comment and we’ll get back to you as soon as we can.